INTELLIGENT AGENTS

Artefacts from this module

Background

This section of the e-portfolio documents my learning and development throughout the Intelligent Agents module, aligning with the specified requirements and grading criteria. It includes artefacts and reflections that demonstrate my progress, contributions, and insights gained during the module.

Weekly Entries

Theory

Trends in Computing

We started this module by exploring five major trends in modern computing:

- Ubiquity: Smart technologies have become increasingly affordable and prevalent, leading to widespread adoption in everyday devices—from IoT applications to voice assistants like Alexa and Siri.

- Interconnection: The digital world is more connected than ever, driven by the proliferation of the internet, mobile computing, and 5G networks, with over 14.4 billion devices now connected globally.

- Intelligence: The complexity of tasks we can automate continues to increase. Machines now handle operations that were previously unimaginable, thanks to hardware advances and sophisticated algorithms.

- Delegation: We increasingly delegate tasks—sometimes even safety-critical ones like aircraft control—to automated systems, trusting them over human operators in many scenarios.

- Human-Orientation: Interaction models have shifted from machine-centric interfaces (e.g., punch cards) to more intuitive methods like touch and voice, improving accessibility and user experience.

Introduction to Agents

We also examined the concept of an agent in general: a system capable of taking independent actions to meet objectives within its defined environment.

- Agents perceive their environment using sensors and act through effectors.

- They operate with varying degrees of autonomy, from passive services (like APIs) to semi-autonomous and fully autonomous systems.

- Agent actions often have preconditions. For instance, the action “pick up cat” only makes sense if a cat is present and willing, and if the agent can lift it.
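The idea of preconditions can be made concrete with a small sketch. The `World` class, its fields and the `max_lift_kg` parameter are hypothetical names invented for this illustration. The sketch only shows that the agent checks every precondition before it commits to the action.

```python
from dataclasses import dataclass


@dataclass
class World:
    """Hypothetical world state for the "pick up cat" example."""
    cat_present: bool
    cat_willing: bool
    cat_weight_kg: float


def can_pick_up_cat(world: World, max_lift_kg: float) -> bool:
    """All three preconditions must hold before the action makes sense."""
    return (
        world.cat_present
        and world.cat_willing
        and world.cat_weight_kg <= max_lift_kg
    )


def pick_up_cat(world: World, max_lift_kg: float) -> str:
    # The agent checks the preconditions first and only then acts.
    if not can_pick_up_cat(world, max_lift_kg):
        return "skip"
    return "pick up cat"


print(pick_up_cat(World(True, True, 4.0), max_lift_kg=10.0))   # pick up cat
print(pick_up_cat(World(True, False, 4.0), max_lift_kg=10.0))  # skip
```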

Contributions & Artefacts

First Weekly Discussion - Initial Post

Growing system complexity, distributed environments and the limitations of traditional centralized architectures are just some of the reasons for the rise of agent-based systems. Today's digital ecosystem has become ever more interconnected and unpredictable, which has increased the need for autonomous and adaptive systems. Agent-based computing emerged to address these challenges by offering systems that can operate independently: agents interact with one another and make decisions by themselves (Russell and Norvig, 2021).

Scalability, modularity and robustness are just some of the factors that motivate companies to introduce agent-based systems. In sharp contrast to traditional procedural or object-oriented systems, agents can represent goal-oriented entities that work in a decentralised way and are capable of negotiation, cooperation and conflict resolution by themselves. Agent-based systems are especially valuable in dynamic fields like supply chain management, logistics and coordination, financial markets and smart power grids. All these examples share complex environments in which a system can either take over decisions for stakeholders or simulate the real-world complexity of the environment in order to improve processes and procedures.

There are various agent models in use today. On one side there are reactive agents, which respond to environmental inputs without internal models; on the other there are deliberative agents, which use symbolic reasoning and planning. Hybrid models combine both approaches for flexibility and responsiveness (Jennings and Wooldridge, 1998). Learning agents, often supported by reinforcement learning or evolutionary algorithms, further extend this paradigm by adapting over time to improve performance.

In summary, agent-based systems reflect a shift from traditional automation toward decentralised, intelligent coordination.

Reflection

Weekly Reflection

This week introduced me to the broader trends in computing and the concept of agents. I realised how decentralisation and autonomy make agents valuable in increasingly complex environments, which linked well to the first discussion on why agent-based systems are emerging.

References

- University of Essex Online (2025) ‘Introduction to Agents-Based Computing’ [Online learncast]. In: Intelligent Agents.

- Wooldridge, M.J. (2009) An Introduction to Multiagent Systems. Chichester: John Wiley & Sons.

- Russell, S.J. and Norvig, P., 1995. Artificial intelligence: a modern approach. Prentice Hall.

- Jennings, N. and Wooldridge, M.J. eds., 1998. Agent technology: foundations, applications, and markets. Springer Science & Business Media.

Theory

Artificial Intelligence: A Modern Approach

Chapter 8 of Artificial Intelligence: A Modern Approach (Russell & Norvig, 2021) is about First-Order Logic (FOL) extending propositional logic to represent objects, relations, and quantifiers so that AI systems can express and reason about the general structure of the world. The chapter introduces the syntax and semantics of FOL, showing how it can represent facts about objects, their properties, and relationships. Russell & Norvig discuss how to convert English sentences into FOL expressions, the role of equality, and how variables, terms, and functions work. They also cover the expressiveness of FOL compared to propositional logic, showing how it can model general rules (e.g., “All humans are mortal”) rather than just specific facts. The chapter closes with guidance on building knowledge bases in FOL and preparing them for inference in later chapters.
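To illustrate the difference between a general rule and specific facts, here is a minimal sketch. It is not full first-order logic: it handles only one-argument predicates and rules of the form "for all x, P(x) implies Q(x)". The names `facts`, `rules` and `forward_chain` are my own for this example.

```python
# Facts are (predicate, object) pairs; the names are illustrative only.
facts = {("Human", "Socrates"), ("Human", "Hypatia"), ("Cat", "Tom")}

# One general rule, read as: for all x, Human(x) implies Mortal(x).
rules = [("Human", "Mortal")]


def forward_chain(facts, rules):
    """Apply every rule to every matching object until nothing new appears."""
    derived = set(facts)
    changed = True
    while changed:
        changed = False
        for premise, conclusion in rules:
            for predicate, obj in list(derived):
                new_fact = (conclusion, obj)
                if predicate == premise and new_fact not in derived:
                    derived.add(new_fact)
                    changed = True
    return derived


kb = forward_chain(facts, rules)
print(("Mortal", "Socrates") in kb)  # True
print(("Mortal", "Tom") in kb)       # False
```

A single quantified rule covers every human in the knowledge base, whereas propositional logic would need one separate sentence per individual.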

Contributions & Artefacts

First Weekly Discussion - Responses

Peer Response 1

Hi Jordan

Thank you very much for your contribution. I like how you outlined how agent-based systems differ from traditional approaches, especially in highlighting the emergent behaviour that results from simple agent rules. Your point about their suitability for modelling complex, interconnected systems resonates strongly with current research trends.

I would like to expand on the area that looks at the link between these capabilities and organisational resilience. Jennings and Bussmann (2003) highlight that agent-based systems perform particularly well in environments with high uncertainty, as their decentralised structure enables them to adapt to disruptions without being slowed down by centralised decision-making. This is especially useful when talking about supply chain logistics, where agents can adjust routes or priorities on the fly when unexpected events occur. This could, for example, be used in large warehouses like Amazon warehouses to increase profitability by reducing process time and decreasing manual labor. As Russell and Norvig (2021) point out, incorporating learning mechanisms allows agents to improve over time, creating long-term performance gains that static rule-based agents cannot match.

Peer Response 2

Hi Abdulla

Thank you for your contribution. You have clearly laid out the organisational drivers behind the rise of agent-based systems, especially the link between decentralisation and adaptability. I think your point about ABS reflecting real-world phenomena through agent-to-agent interaction is key, as this mirrors how many modern systems actually operate in complex environments.

Proselkov et al. (2024) show that in adaptive supply networks, decentralised decision making lets agents react to disruptions immediately. They do not rely on central coordination, which would otherwise increase reaction time significantly. This is especially important where reaction time is a critical factor. Macal and North (2010) note that ABS can run what-if scenarios in ways traditional top-down methods cannot, giving organisations a safe space to test strategies before rolling them out.

I also share your point on predictive capabilities. As Russell and Norvig (2021) explain, adding learning mechanisms allows agents to improve their decision making over time, making resource use and operations more efficient and turning ABS from a fixed automation tool into an intelligent adaptive asset.

Reflection

Weekly Reflection

Engaging with First-Order Logic was challenging but rewarding. The peer discussion on decentralisation and resilience helped me see how logical formalism underpins the adaptability of agents in uncertain environments.

References

- Jennings, N.R. and Bussmann, S., 2003. Agent-based control systems. IEEE control systems, 23(3), pp.61-74.

- Russell, S. and Norvig, P., 2021. Artificial intelligence: a modern approach.

- Proselkov, Y., Zhang, J., Xu, L., Hofmann, E., Choi, T.Y., Rogers, D. and Brintrup, A., 2024. Financial ripple effect in complex adaptive supply networks: an agent-based model. International Journal of Production Research, 62(3), pp.823-845.

- Macal, C.M. and North, M.J., 2005, December. Tutorial on agent-based modeling and simulation. In Proceedings of the Winter Simulation Conference, 2005. (pp. 14-pp). IEEE.


Theory

Agent Based Systems

This week we explored how agents are structured and how they operate within an environment. At the core of every agent lies its architecture. Architecture is the combination of the software program and the hardware or platform on which it runs. According to Maes and Kaelbling, architectures decompose an agent into interacting modules, defining how percepts from the environment and the agent’s internal state map to actions. We studied different types of agents: symbolic reasoning agents that use explicit models and logical inference, reactive agents that rely on direct stimulus–response patterns, and hybrid agents that combine both approaches. Symbolic agents provide powerful reasoning but struggle with real-world translation and computational complexity, known as the transduction and representation problems. This has motivated the rise of reactive and hybrid architectures, which focus on responsiveness and adaptability.

Practical Reasoning Architecture

A key concept introduced was practical reasoning, where agents reason not just about what is true, but about what to do. Bratman’s theory of Beliefs, Desires and Intentions (BDI) provides a model for such reasoning. Agents deliberate to decide on goals, then apply means–end reasoning to generate plans. Intentions introduce the crucial element of commitment: while desires represent possibilities, intentions are the chosen goals that guide the agent’s behaviour. This architecture enables agents to pursue complex objectives but also raises challenges, such as the side-effect problem where unintended consequences can arise from pursuing intentions. We also examined reactive subsumption architectures, where simple behaviours are layered to create emergent intelligence, as illustrated by Brooks’ robots and Reynolds’ flocking model. Together, these perspectives highlight the trade-offs between symbolic control, practical reasoning, and emergent reactive behaviour in designing intelligent agents.
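The two stages of practical reasoning can be sketched in a few lines. This is a simplified illustration under my own assumptions, not a full BDI interpreter. Beliefs are a set of strings, desires are an ordered list, and the delivery-robot scenario and the `plan_library` structure are invented for the example.

```python
def deliberate(beliefs, desires):
    """Deliberation: commit to the first desire that is not yet satisfied."""
    for goal in desires:
        if goal not in beliefs:
            return goal
    return None


def plan(beliefs, intention, plan_library):
    """Means-end reasoning: pick a stored plan whose preconditions hold."""
    for candidate in plan_library.get(intention, []):
        if candidate["pre"] <= beliefs:
            return candidate["steps"]
    return []


# Hypothetical beliefs, desires and plan library for a delivery robot.
beliefs = {"at_dock", "battery_ok"}
desires = ["parcel_delivered", "battery_charged"]
plan_library = {
    "parcel_delivered": [
        {"pre": {"at_dock", "battery_ok"},
         "steps": ["load_parcel", "drive_to_customer", "hand_over"]},
    ],
}

intention = deliberate(beliefs, desires)
steps = plan(beliefs, intention, plan_library)
print(intention)
print(steps)
```

The intention is the one desire the agent has committed to. The remaining desires stay as possibilities until the agent deliberates again.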

Contributions & Artefacts

First Weekly Discussion - Summary Post

Our discussions about agent-based systems highlighted both the drivers behind their rise and the opportunities and risks they bring to organisations. My initial post emphasised how increasing system complexity, distributed environments, and the limitations of centralised architectures have created demand for decentralised, autonomous, and adaptive systems. Agent-based systems address these needs by providing scalability, modularity, and robustness, with applications ranging from supply chain logistics to financial markets (Russell & Norvig, 2021; Jennings & Wooldridge, 1998).

Peer contributions broadened this perspective. Paul underlined the importance of advances in computational resources and networking, noting that distributed infrastructures and reinforcement learning have strengthened the case for ABS adoption (Macal & North, 2014; Silver et al., 2021). He also stressed the significance of emergent behaviour, where complex outcomes arise from local interactions (Bonabeau, 2002). Jordan similarly highlighted emergent dynamics, but also raised the risks of negative emergent effects in critical environments (Altmann et al., 2024). To mitigate these, peers pointed to measures such as human-in-the-loop oversight (Wu et al., 2023) and formal verification (Akhtar, 2015).

Overall, the discussion reaffirmed that ABS are not just an alternative to traditional computing but a paradigm shift towards distributed intelligence.

Reflection

Weekly Reflection

Exploring agent architectures deepened my understanding of the trade-offs between symbolic reasoning, reactive patterns, and hybrid designs. The summary discussion showed me how peers connected emergent behaviour and human-in-the-loop oversight to practical applications.

References

- University of Essex Online (2025) ‘Agent Architectures’ [Online learncast]. In: Intelligent Agents.

- Silver, D., Singh, S., Precup, D. and Sutton, R.S., 2021. Reward is enough. Artificial intelligence, 299, p.103535.

- Macal, C.M. and North, M.J., 2014. Introductory tutorial: Agent-based modeling and simulation. Proceedings of the Winter Simulation Conference, pp.6–20. https://doi.org/10.1109/WSC.2014.7019874

- Bonabeau, E., 2002. Agent-based modelling: Methods and techniques for simulating human systems. Proceedings of the National Academy of Sciences, 99(suppl 3), pp.7280–7287. https://doi.org/10.1073/pnas.082080899

- Altmann, P., Schönberger, J., Illium, S., Zorn, M., Ritz, F., Haider, T., Burton, S. and Gabor, T. (2024) Emergence in Multi-Agent Systems: A Safety Perspective. arXiv:2408.04514v1 [cs.MA].

- Wu, J., Huang, Z., Hu, Z. and Lv, C. (2023) 'Toward Human-in-the-Loop AI: Enhancing Deep Reinforcement Learning via Real-Time Human Guidance for Autonomous Driving', Engineering, 21, pp. 75-91. doi: 10.1016/j.eng.2022.05.017.

- Akhtar, N. (2015). Requirements, Formal Verification and Model transformations of an Agent-based System: A CASE STUDY. arXiv. https://doi.org/10.48550/arXiv.1501.05120

Theory

Reactive and Hybrid Agents

This chapter looks at how agents can be designed without depending on complex symbolic reasoning. Reactive agents follow a straightforward stimulus–response pattern: they sense their environment and act right away, without holding an internal symbolic model. Wooldridge discusses architectures like Brooks’ subsumption model, where layered behaviours (such as obstacle avoidance, wandering, or exploration) can override one another, producing adaptive and resilient behaviour. Reactive agents stand out for their simplicity, speed, and reliability in fast-changing environments, but they are limited when it comes to long-term planning or handling abstract problems.
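A simplified reading of layered behaviours is a priority list in which the first behaviour that fires overrides the rest. The sketch below assumes that reading. The percept keys, the 0.5 distance threshold and the action names are hypothetical, and real subsumption architectures wire layers together with suppression and inhibition links rather than a plain loop.

```python
def avoid_obstacle(percept):
    # Highest priority: fires whenever an obstacle is close.
    if percept.get("obstacle_distance", float("inf")) < 0.5:
        return "turn_away"
    return None


def explore(percept):
    # Middle priority: head for unexplored space when it is visible.
    if percept.get("unexplored_area_visible"):
        return "move_to_unexplored"
    return None


def wander(percept):
    # Lowest priority: default behaviour that always proposes an action.
    return "move_randomly"


# Ordered from highest to lowest priority.
LAYERS = [avoid_obstacle, explore, wander]


def select_action(percept):
    """Return the action of the first layer that fires; it overrides the rest."""
    for behaviour in LAYERS:
        action = behaviour(percept)
        if action is not None:
            return action


print(select_action({"obstacle_distance": 0.2, "unexplored_area_visible": True}))
print(select_action({"unexplored_area_visible": True}))
print(select_action({}))
```

No behaviour keeps a model of the world. Each one maps the current percept directly to an action, which is why such agents are fast but cannot plan ahead.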

To address this, hybrid architectures are introduced, blending reactive mechanisms with deliberative reasoning. These agents are usually built with multiple layers: a planning layer, a reactive layer for immediate action, and coordination in between. Examples include TouringMachines and InteRRaP, both designed to combine efficiency with adaptability.

The key takeaway is that no single approach is universally best. Reactive designs are powerful in dynamic, uncertain domains, while hybrid models offer a balance, managing both immediate responses and longer-term goals.

Reflection

Weekly Reflection

This week clarified how reactive and hybrid agents complement one another. I understood that neither is a universal solution, and hybrid approaches like InteRRaP offer a balance of efficiency and adaptability.

References

- Wooldridge, M. J. (2009) An introduction to multiagent systems. (2nd ed). New York: John Wiley & Sons.

Theory

Agent Communication

Communication is central to multi-agent systems, as most tasks require agents to exchange information, request actions, or query one another. To formalise how agents communicate, researchers draw on speech act theory, first introduced by Austin (1962) and extended by Searle (1969). Speech acts treat language as actions that can change the world. Searle identified five categories:

- Representatives – stating facts (“It’s raining”).

- Directives – attempting to get someone to do something (“Pass the salt”).

- Commissives – committing to a future action (“I promise to help”).

- Expressives – expressing internal state (“Thank you”, “I feel sad”).

- Declarations – performing an action by speaking (“I now pronounce you married”, “We declare war”).

Each speech act has two parts: the performative verb (the intention, e.g. request, inform) and the propositional content (the actual information). For example, “The door is closed” could be an observation, a request, or a question, depending on the intention. Cohen and Perrault formalised these semantics with precondition–delete–add rules, ensuring agents make requests only when meaningful (e.g., not requesting impossible actions).

Agent-Communication Languages

To operationalise this in multi-agent systems, Agent Communication Languages (ACLs) provide a protocol for structured interaction. One example is KQML (Knowledge Query and Manipulation Language), which defines performatives like ask-if (query truth), tell (provide information), perform (request an action), and reply (answer a query). KQML works alongside KIF (Knowledge Interchange Format), a logic-based language for expressing the actual message content.
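The split between performative and content can be sketched with plain dictionaries. This is an illustration only: it does not reproduce KQML's concrete message syntax, the strings merely stand in for KIF expressions, and the `handle` function and `knowledge_base` are hypothetical names.

```python
# Bob's toy knowledge base; plain strings stand in for KIF content.
knowledge_base = {"(in-stock tv-50)"}


def handle(message):
    """Dispatch on the performative and answer with a reply message."""
    if message["performative"] == "ask-if":
        answer = "true" if message["content"] in knowledge_base else "false"
        return {
            "performative": "reply",
            "sender": message["receiver"],
            "receiver": message["sender"],
            "in-reply-to": message["reply-with"],
            "content": answer,
        }
    if message["performative"] == "tell":
        # The sender asserts a fact, so the receiver stores it.
        knowledge_base.add(message["content"])
        return None
    raise ValueError(f"unsupported performative: {message['performative']}")


query = {
    "performative": "ask-if",
    "sender": "Alice",
    "receiver": "Bob",
    "reply-with": "q1",
    "content": "(in-stock tv-50)",
}
print(handle(query)["content"])  # true
```

The receiver decides what to do from the performative alone. The content is interpreted only afterwards, which is what separates this from a direct method call.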

Successful communication also requires a shared vocabulary, which is provided by ontologies. Ontologies define concepts, categories, and relationships in a domain (e.g., in the Blocks World ontology: On(x,y), OnTable(x), Clear(x)). Actions are described with preconditions, deletions, and additions (e.g., Stack(x,y) requires Clear(y) and Holding(x)). A challenge is semantic heterogeneity – different agents may use different ontologies. To address this, ontology alignment methods identify correspondences between terms, while approaches like Correspondence Inclusion Dialogue (CID) allow agents to negotiate shared meaning without revealing full internal ontologies.
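The precondition, delete and add description of an action can be sketched as set operations on a state of ground facts. The preconditions of Stack(x, y) are the ones named above. The delete and add lists, including the `ArmEmpty` fact, are my assumptions based on the usual textbook formulation.

```python
def stack(state, x, y):
    """Apply Stack(x, y) to a set of ground facts, STRIPS style."""
    preconditions = {("Clear", y), ("Holding", x)}
    # Assumed delete and add lists; only the preconditions come from the text.
    delete_list = {("Clear", y), ("Holding", x)}
    add_list = {("On", x, y), ("ArmEmpty",)}
    if not preconditions <= state:
        raise ValueError(f"Stack({x}, {y}) is not applicable")
    return (state - delete_list) | add_list


state = {("OnTable", "B"), ("Clear", "B"), ("Holding", "A")}
new_state = stack(state, "A", "B")
print(sorted(new_state))
```

Two agents can only exchange such facts meaningfully if both read `On`, `Clear` and `Holding` the same way, which is the role the shared ontology plays.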

Contributions & Artefacts

Second Weekly Discussion - Initial Post

Agent Communication Languages (ACLs), such as Knowledge Query and Manipulation Language (KQML), were designed to standardise the way autonomous agents exchange information.

Their main advantage is the high-level abstraction they provide. Rather than just passing data, agents can exchange speech acts such as inform, request, or propose, which embed semantics about intent (Labrou and Finin, 1997). This facilitates cooperation, negotiation, and knowledge sharing in distributed systems, especially when agents are heterogeneous and developed independently from each other.

Compared to direct method invocation in programming languages such as Python or Java, ACLs offer greater flexibility and interoperability. In a traditional method call, the sender must know the exact interface and implementation details of the receiver, as the call may otherwise not work properly or not work at all. In contrast, ACLs aim to decouple communication from implementation, allowing agents to understand each other dynamically and interpret messages according to an agreed ontology (Wooldridge, 2009).

When it comes to disadvantages, parsing and interpreting high-level speech acts incurs additional computational overhead, because messages lack the simple, uniform structure familiar from API calls (Paurobally, Tamma and Wooldridge, 2007). Furthermore, achieving true semantic interoperability requires shared ontologies, which can be difficult to standardise across domains.

Reflection

Weekly Reflection

Agent communication and speech act theory made me appreciate the complexity of enabling cooperation. Writing my initial discussion post showed me the benefits and limitations of ACLs in comparison to traditional method calls.

References

- University of Essex Online (2025) ‘Agent Communication’ [Online learncast]. In: Intelligent Agents.

- Labrou, Y. and Finin, T., 1994, November. A semantics approach for KQML—a general purpose communication language for software agents. In Proceedings of the third international conference on Information and knowledge management (pp. 447-455).

- Paurobally, S., Tamma, V. and Wooldridge, M., 2007. A framework for web service negotiation. ACM Transactions on Autonomous and Adaptive Systems (TAAS), 2(4), pp.14-es.

Theory

KQML as an agent communication language

Finin et al. (1994) introduce the Knowledge Query and Manipulation Language (KQML) as a general-purpose communication language for software agents. The paper outlines KQML’s design as a protocol for exchanging information and knowledge in distributed, heterogeneous environments. It emphasises how KQML supports “performatives,” or high-level message types such as ask, tell, and achieve, grounded in speech act theory, which allow agents to communicate not just data but intent. The authors highlight KQML’s flexibility for knowledge sharing, negotiation, and coordination, while also acknowledging challenges around defining precise semantics and ensuring interoperability across diverse agent systems.

Contributions & Artefacts

Second Weekly Discussion - Peer Responses

Peer Response 1

Hi Nikos, thank you for your interesting post and also for your thoughtful response to my own contribution. I appreciate how you expanded on the grounding of ACLs in speech act theory and the role of conversation policies in structuring multi-step dialogues. This highlights well the contrast to simple method invocation, which lacks both semantics and protocol-level coherence. Your point about inconsistent implementations of KQML also resonates with me. Without well-defined semantics, it becomes difficult to guarantee interoperability in heterogeneous environments (Finin et al., 1994).

Building on your initial post, I found your emphasis on autonomy particularly important. As you note, method calls in object-oriented programming enforce deterministic execution, while ACLs allow agents to interpret messages according to their goals and constraints. This flexibility aligns closely with Wooldridge’s (2009) distinction between objects acting because they are invoked versus agents acting because they choose to. The autonomy dimension not only adds realism but also supports negotiation-based problem solving in distributed environments (Jennings, Sycara and Wooldridge, 1998).

At the same time, I agree with your caution regarding ontology alignment. Shared vocabularies are essential for semantic interoperability, and mismatches can undermine otherwise well-structured conversations. This is a risk that must be considered and managed (Payne and Tamma, 2014).

Peer Response 2

Hi Mohamed, thank you for your clear and well-structured post. I especially appreciated how you contrasted the abstraction and semantic richness of ACLs with the efficiency and clarity of direct method invocation. Your point about ACLs enabling intentions such as inform, ask or recommend rather than just raw data transfer really captures the essence of why they were designed in the first place (Finin, Labrou and Mayfield, 1994).

I would like to build on your observation about efficiency. While it is true that parsing ACL performatives adds computational overhead, research suggests that this trade-off is justified in environments where negotiation and autonomy are critical. Jennings, Sycara and Wooldridge (1998) argue that ACLs enable distributed problem solving in ways that direct invocation cannot, because they allow agents to flexibly decide how to respond, rather than being constrained to execute a predetermined method. Of course, this means that one must decide actively whether to choose this trade-off or not.

Your discussion of ontology alignment also stood out and was very interesting. Labrou and Finin (1997) indeed highlight this as one of the biggest challenges in ACL use. More recent work in semantic web technologies has tried to address this problem through ontology matching and mediation frameworks (Shvaiko and Euzenat, 2013). These tools can reduce miscommunication but, as you noted, they further increase complexity and computational effort.

Overall, I fully agree with your conclusion that ACLs might outperform other methods but come with larger costs, which means they are not the perfect fit in all scenarios.

Creating Agent Dialogues

The exercise was to create a short KQML dialogue (with KIF content) where Alice, a procurement agent, asks Bob, a warehouse agent, whether 50-inch TVs are in stock, how many are available, and how many HDMI ports they have.

Alice asks Bob whether any 50-inch TVs are in stock:

(kqml

:performative ask-if

:sender Alice

:receiver Bob

:language KIF

:ontology warehouse-ontology-v1

:reply-with q1

:content

"(exists (?t ?q)

(and (television ?t)

(= (size-inch ?t) 50)

(stock-qty ?t ?q)

(> ?q 0)))")

Bob returns confirmation of availability:

(kqml

:performative tell

:sender Bob

:receiver Alice

:in-reply-to q1

:language KIF

:ontology warehouse-ontology-v1

:content "true")

Alice asks Bob about the HDMI ports:

(kqml

:performative ask-all

:sender Alice

:receiver Bob

:language KIF

:ontology warehouse-ontology-v1

:reply-with q3

:content

"(setof (?m ?k)

(exists (?t)

(and (television ?t)

(= (size-inch ?t) 50)

(model ?t ?m)

(hdmi-ports ?t ?k))))")

Bob reports the number of HDMI ports per model:

(kqml

:performative tell

:sender Bob

:receiver Alice

:in-reply-to q3

:language KIF

:ontology warehouse-ontology-v1

:content

"(= (setof (?m ?k)

(exists (?t)

(and (television ?t)

(= (size-inch ?t) 50)

(model ?t ?m)

(hdmi-ports ?t ?k))))

{ (\"Samsung-QN90B-50\" 4)

(\"LG-C3-50\" 4)

(\"Sony-X90L-50\" 2) })")

*I used an LLM to facilitate the creation of the KQML queries.

Reflection

Weekly Reflection

Studying KQML in depth highlighted how autonomy and semantics are built into agent communication. The peer responses and the KQML dialogue exercise gave me hands-on experience of how structured interaction works in practice.

References

- Finin, T., Fritzson, R., McKay, D. and McEntire, R., 1994, November. KQML as an agent communication language. In Proceedings of the third international conference on Information and knowledge management (pp. 456-463).

- Wooldridge, M., 2009. An introduction to multiagent systems. John Wiley & Sons.

- Jennings, N.R., Sycara, K. and Wooldridge, M., 1998. A roadmap of agent research and development. Autonomous agents and multi-agent systems, 1(1), pp.7-38.

- Payne, T.R. and Tamma, V., 2014, May. Negotiating over ontological correspondences with asymmetric and incomplete knowledge. In Proceedings of the 2014 international conference on Autonomous agents and multi-agent systems (pp. 517-524).

- Labrou, Y. and Finin, T. (1997) Semantics and conversations for an agent communication language. In: Proceedings of the 15th International Joint Conference on Artificial Intelligence. San Francisco: Morgan Kaufmann, pp. 584–591.

- Shvaiko, P. and Euzenat, J. (2013) ‘Ontology matching: state of the art and future challenges’, IEEE Transactions on Knowledge and Data Engineering, 25(1), pp. 158–176. https://doi.org/10.1109/TKDE.2011.253

Theory

Introduction to NLP

Humans are unique in using language to express limitless ideas, which is why the Turing Test focused on linguistic ability. Unlike formal languages (with crisp syntax and semantics), natural language is messy: grammar is flexible, meanings are probabilistic, and ambiguity is everywhere (polysemy, sarcasm, anaphora, “He saw her duck”). NLP exists to bridge this gap by enabling machines to interpret and communicate in our inherently noisy, context-dependent language that dominates today’s data.

Techniques for NLP

NLP draws on pragmatics (implicature, presupposition) and statistical methods like next-word prediction, underpinned by the distributional hypothesis (“a word is known by the company it keeps”). Word embeddings (e.g., word2vec) capture semantic/syntactic relationships and support similarity queries and vector arithmetic (king−man+woman≈queen); patterns like Hearst rules extract hyponym relations at scale. For deeper structure, formal approaches use context-free grammars with constituency parse trees, while dependency parsing models head-dependent relations between words as a graph.
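The vector arithmetic can be sketched with hand-made two-dimensional vectors. The numbers below are invented so that the analogy works exactly. Real embeddings such as word2vec are learned from text and have hundreds of dimensions, and the `analogy` helper is a hypothetical name.

```python
import math

# Hand-made 2-D "embeddings" (axes: royalty, gender); purely illustrative.
vectors = {
    "king": (1.0, 1.0),
    "queen": (1.0, -1.0),
    "man": (0.0, 1.0),
    "woman": (0.0, -1.0),
    "apple": (-1.0, 0.2),
}


def cosine(a, b):
    """Cosine similarity: 1.0 means the two vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def analogy(a, b, c):
    """Return the word closest to vec(a) - vec(b) + vec(c), excluding inputs."""
    target = tuple(x - y + z
                   for x, y, z in zip(vectors[a], vectors[b], vectors[c]))
    candidates = [w for w in vectors if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(vectors[w], target))


print(analogy("king", "man", "woman"))  # queen
```

Excluding the three input words from the candidates is common practice for analogy queries, because the nearest neighbour of the target vector is otherwise often one of the inputs.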

Contributions & Artefacts

Second Weekly Discussion - Summary Post

Our discussion on Agent Communication Languages (ACLs) highlighted both their conceptual strengths and their practical limitations. My initial post stressed that their high-level abstraction enables negotiation and cooperation across heterogeneous systems, contrasting with method invocation in Python or Java, which is efficient but tightly coupled. However, ACLs introduce overhead and depend heavily on shared ontologies, which can be difficult to maintain (Payne and Tamma, 2014).

Peer contributions deepened these points. Nikos emphasised the value of conversation policies for structuring multi-step dialogues, while highlighting interoperability challenges caused by inconsistent semantics. Mohamed contrasted ACLs with lightweight paradigms such as RPC, but noted that ontology alignment and mediation frameworks can reduce miscommunication in practice (Shvaiko and Euzenat, 2013).

Overall, the consensus was that ACLs are not a universal solution but a specialised tool: they excel in settings where autonomy and negotiation are valuable, yet they remain costly and computationally complex.

Reflection

Weekly Reflection

Learning about NLP reminded me how uniquely human language is and why machines struggle with ambiguity. I found it interesting to contrast this with agent communication, and the discussion summary made clear that ACLs excel in specific but costly scenarios.

References

- University of Essex Online (2025) ‘Natural Language Processing (NLP)’ [Online learncast]. In: Intelligent Agents.

- Payne, T.R. and Tamma, V. (2014) ‘Negotiating over ontological correspondences with asymmetric and incomplete knowledge’, Autonomous Agents and Multi-Agent Systems, 28(1), pp. 1–35.

- Shvaiko, P. and Euzenat, J. (2013) Ontology matching. 2nd edn. Heidelberg: Springer.

Theory

Getting to Grips with Parse Trees

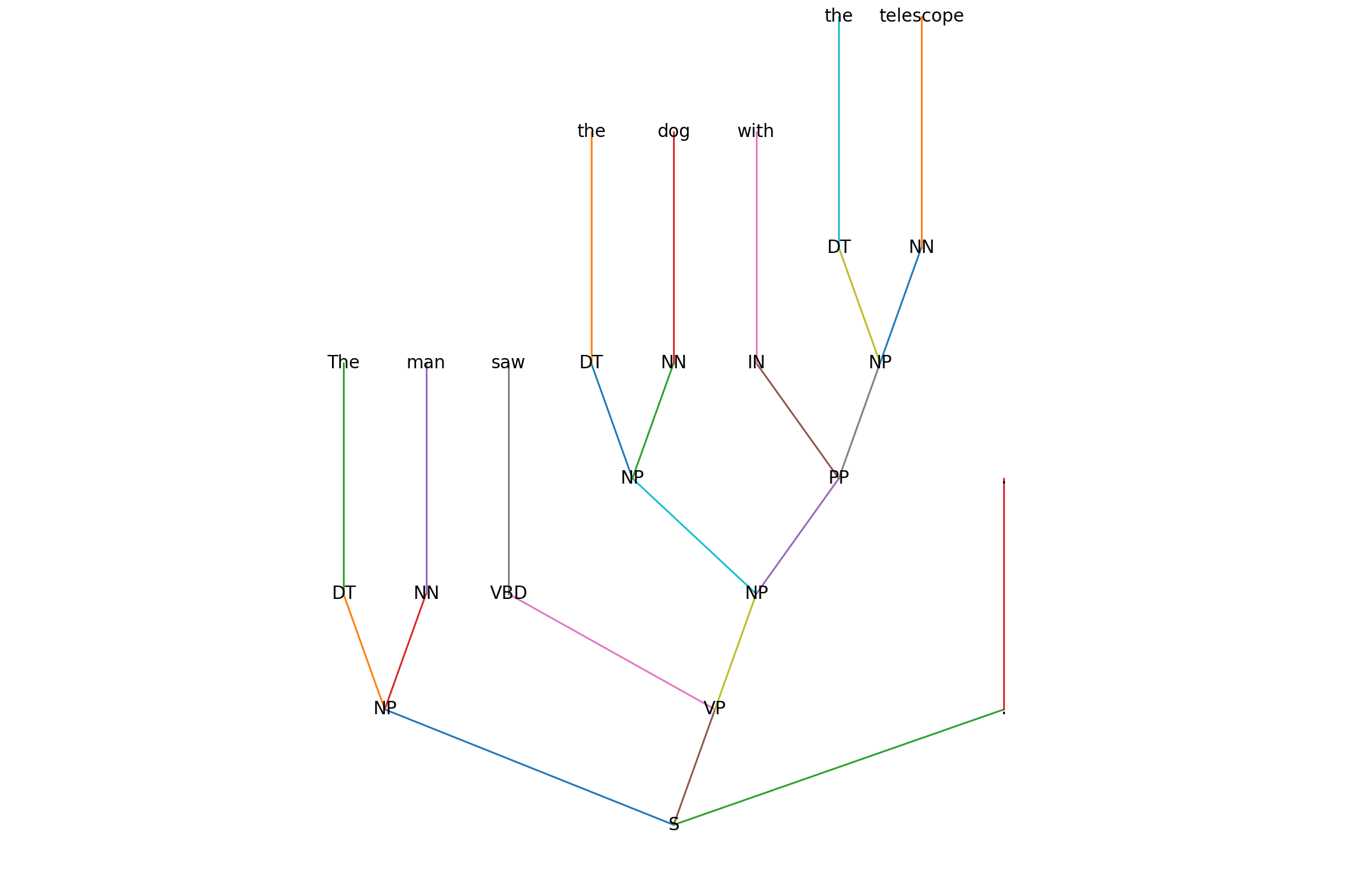

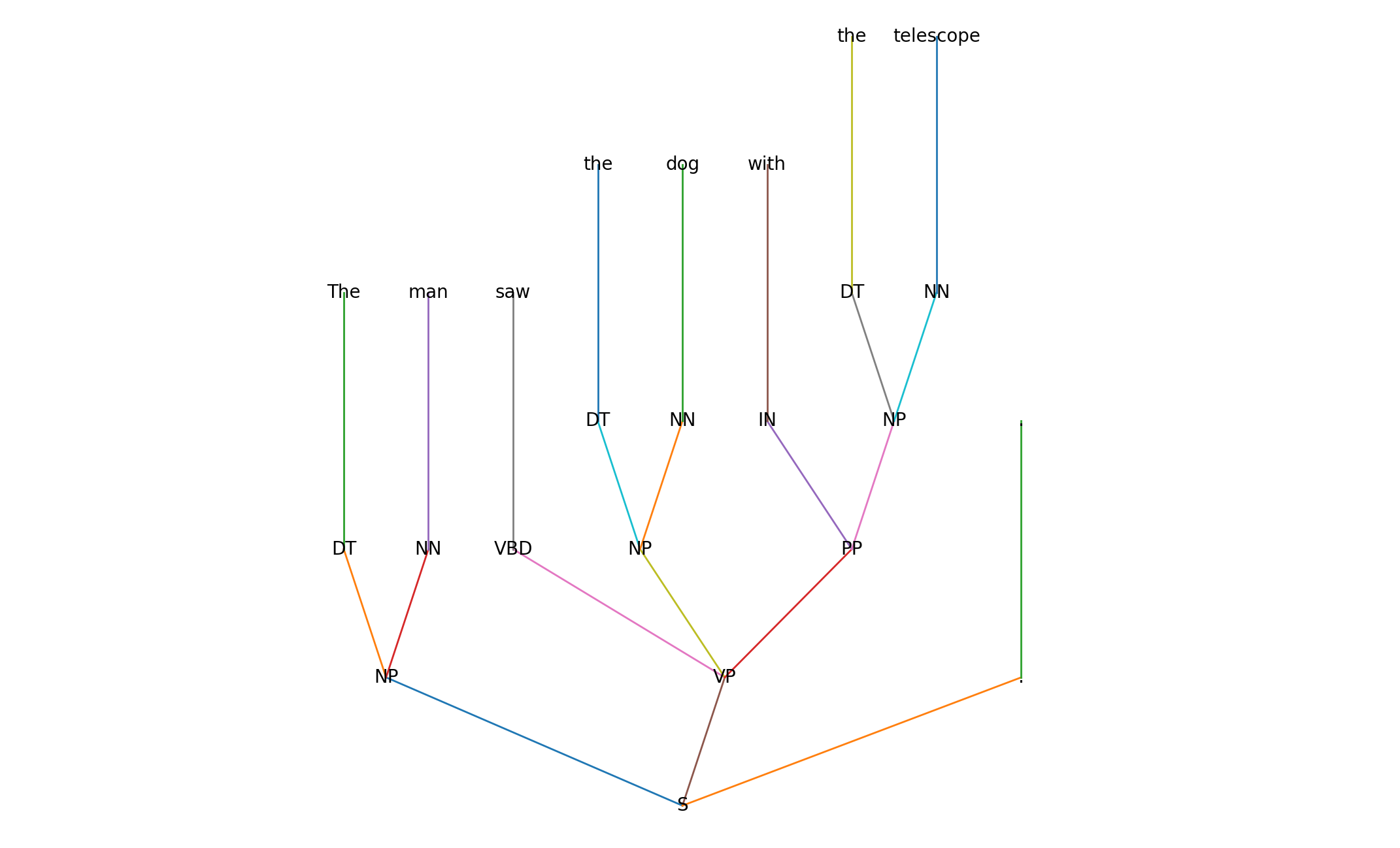

This week’s focus was on parse trees. Zimmerman (2019) explains parse trees as a way of making the hierarchical structure of language explicit. While sentences are written sequentially, parse trees represent them as directed graphs where tokens (syntactic words) are nodes and labelled edges show their grammatical relationships. Each tree has a root, usually the main verb, and subtrees that correspond to phrases. Nodes carry part-of-speech (POS) or full TAG labels, while edges use syntactic dependency labels such as nsubj (nominal subject) or amod (adjectival modifier).
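A dependency parse of this kind can be stored as a list of labelled edges. The sketch below uses a hand-written analysis of the sample sentence "The government raised interest rates". It is my own annotation rather than parser output, and the `children` and `subtree` helpers are hypothetical names.

```python
# (head, label, dependent) triples; a hand-written analysis, not parser output.
edges = [
    ("raised", "nsubj", "government"),
    ("government", "det", "The"),
    ("raised", "dobj", "rates"),
    ("rates", "compound", "interest"),
]
root = "raised"  # the main verb


def children(head):
    """Map each dependency label to the word attached to this head."""
    return {label: dep for h, label, dep in edges if h == head}


def subtree(head):
    """Collect a head and everything that depends on it, directly or not."""
    words = [head]
    for h, _, dependent in edges:
        if h == head:
            words.extend(subtree(dependent))
    return words


print(children(root))
print(subtree("government"))
```

Each subtree corresponds to a phrase. The subtree under "government" holds the words of the subject noun phrase, although the list is ordered by traversal and not by position in the sentence.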

Contributions & Artefacts

Creating Parse Trees

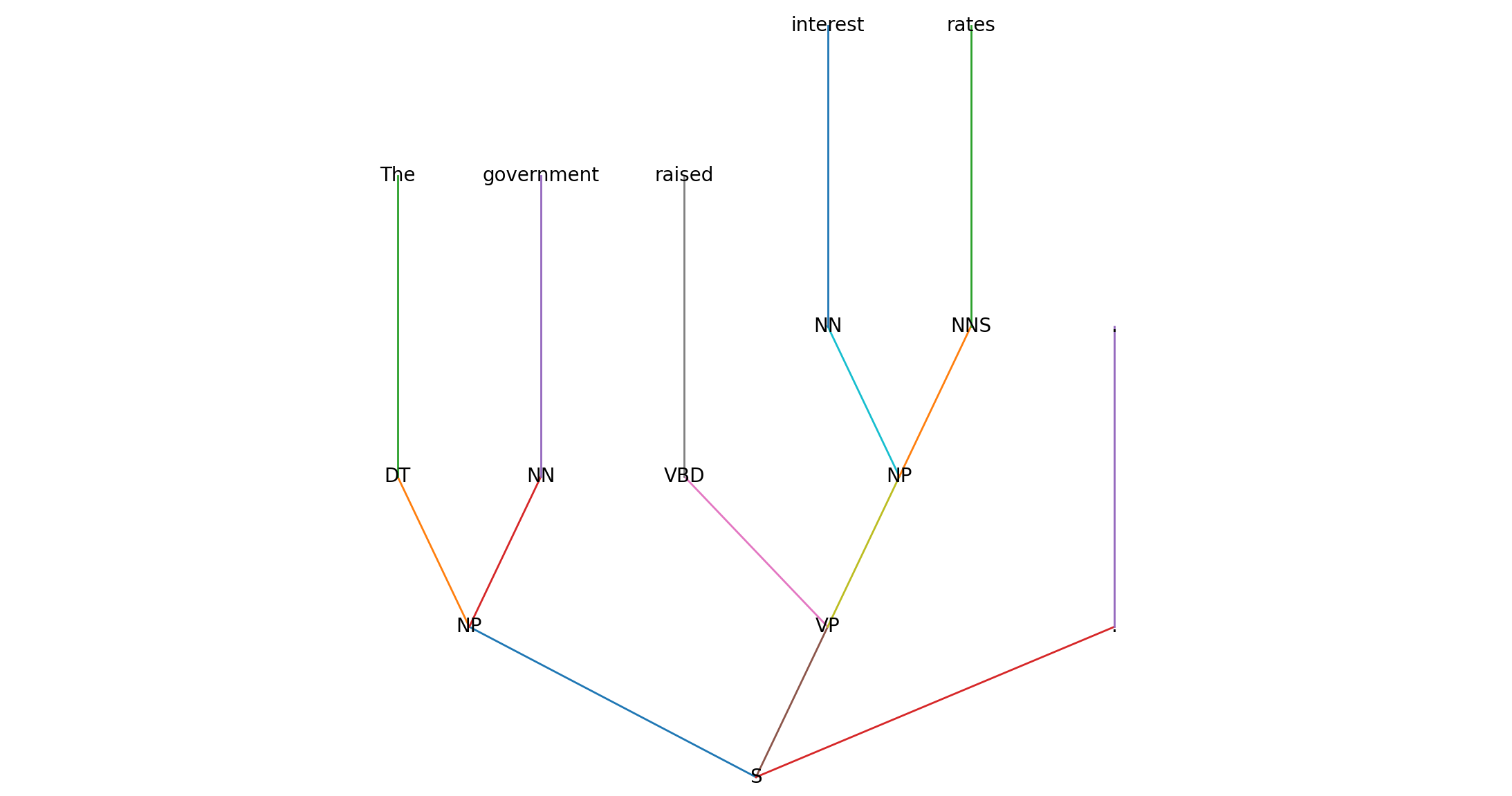

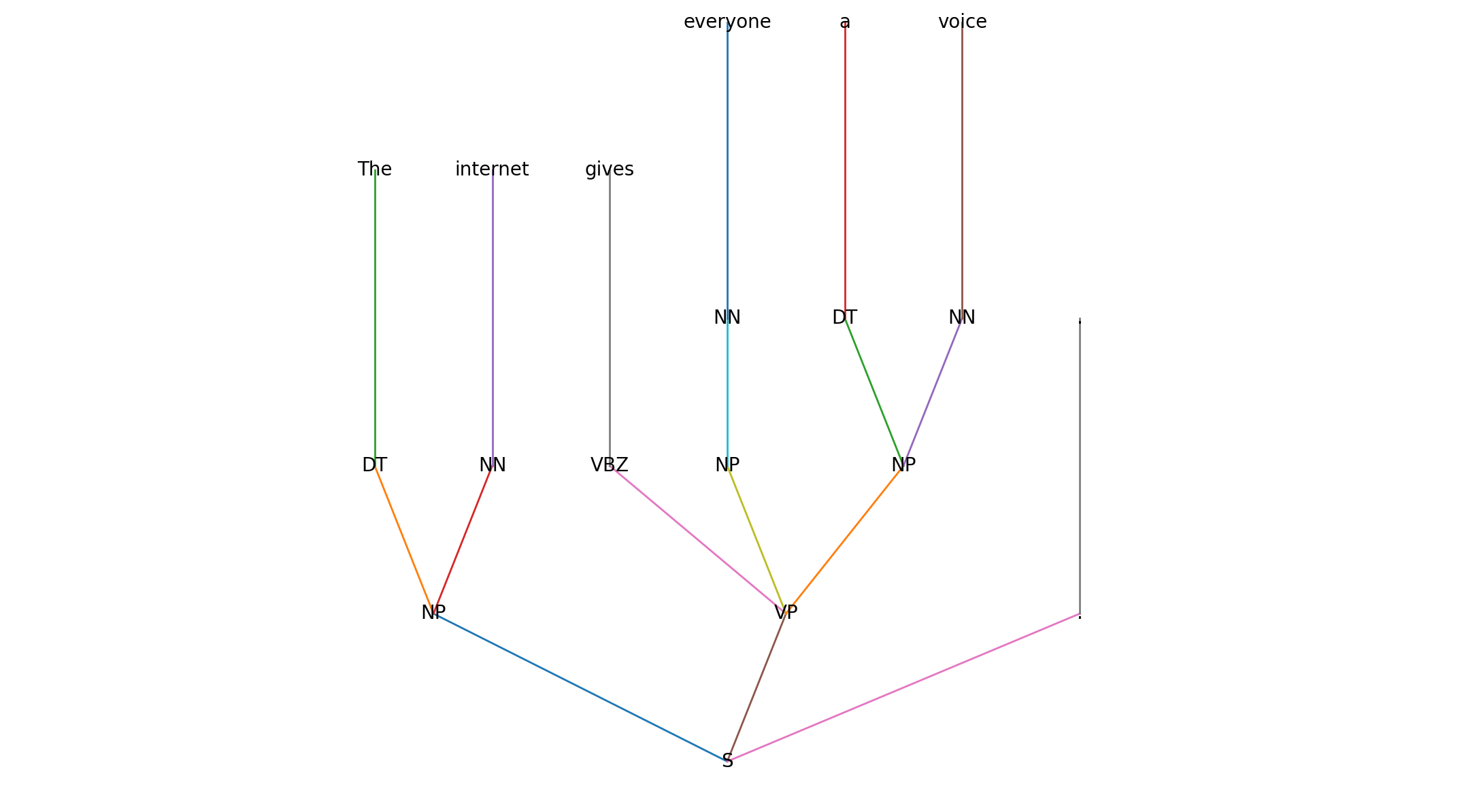

We read Zimmerman's (2019) explanation, which introduced parse trees, and then completed an exercise. The goal of the activity was to create parse trees for three specific sentences:

- The government raised interest rates.

- The internet gives everyone a voice.

- The man saw the dog with the telescope.

In a first step, we define the syntactic parts of a sentence using short labels (e.g., S, NP, VP, PP). Then we build a constituency tree following standard grammar conventions (Penn Treebank style): a single S (sentence) node at the top, branching into phrases (NP, VP, etc.), with terminals (the actual words) at the leaves. Finally, we render the tree and show the result.

We then define the labels and their meaning:

| Label | Meaning | Example |
|---|---|---|
| S | Sentence (clause) | entire sentence |
| NP | Noun Phrase | The government, a voice |
| VP | Verb Phrase | raised interest rates |
| PP | Prepositional Phrase | with the telescope |
| DT | Determiner | the, a |
| NN | Noun, singular | government, dog |
| NNS | Noun, plural | rates |
| VBD | Verb, past tense | raised, saw |
| VBZ | Verb, 3rd person sing. present | gives |
| IN | Preposition | with |
| . | Sentence-final punctuation | . |

Then we build the tree:

- Tokenize & tag: assign POS tags to each word (DT, NN, VBD, …).

- Group into phrases: form NPs, VPs, PPs according to grammar rules.

- Assemble the sentence: create a single S node at the top; typically S → NP VP plus punctuation.

- Attach modifiers: e.g., PPs can modify an NP or a VP (this can be ambiguous).

- Render the tree: draw the hierarchy so phrases and words are visible as a tree.
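The steps above can be sketched in a few lines of plain Python. This is a hedged illustration, not the tool used for the exercise: trees are nested tuples, the helper names (`is_leaf`, `leaves`, `bracketed`, `render`) are hypothetical, and the two trees are hand-built to show the PP-attachment ambiguity in "The man saw the dog with the telescope."

```python
# A tree is a tuple (label, child, ...); a leaf is (POS_tag, "word").

def is_leaf(tree):
    return len(tree) == 2 and isinstance(tree[1], str)


def leaves(tree):
    """Return the words at the leaves, left to right."""
    if is_leaf(tree):
        return [tree[1]]
    return [word for child in tree[1:] for word in leaves(child)]


def bracketed(tree):
    """Serialise the tree in Penn Treebank style bracket notation."""
    if is_leaf(tree):
        return f"({tree[0]} {tree[1]})"
    return f"({tree[0]} " + " ".join(bracketed(c) for c in tree[1:]) + ")"


def render(tree, depth=0):
    """Return indented lines, one node per line, for a quick text rendering."""
    pad = "  " * depth
    if is_leaf(tree):
        return [f"{pad}{tree[0]}  {tree[1]}"]
    lines = [f"{pad}{tree[0]}"]
    for child in tree[1:]:
        lines.extend(render(child, depth + 1))
    return lines


SUBJECT = ("NP", ("DT", "The"), ("NN", "man"))
OBJECT = ("NP", ("DT", "the"), ("NN", "dog"))
WITH_PP = ("PP", ("IN", "with"), ("NP", ("DT", "the"), ("NN", "telescope")))
STOP = (".", ".")

# Reading 1: the PP modifies the verb (the man used the telescope).
VP_ATTACHMENT = ("S", SUBJECT, ("VP", ("VBD", "saw"), OBJECT, WITH_PP), STOP)
# Reading 2: the PP modifies the noun (the dog has the telescope).
NP_ATTACHMENT = ("S", SUBJECT, ("VP", ("VBD", "saw"), ("NP", OBJECT, WITH_PP)), STOP)

print(bracketed(VP_ATTACHMENT))
print(bracketed(NP_ATTACHMENT))
print("\n".join(render(NP_ATTACHMENT)))
```

Both trees have exactly the same leaves; only the position of the PP node differs, which is the ambiguity made explicit.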

These are the results:

Reflection

Weekly Reflection

The parse tree activity helped me connect grammar rules with structured representations. Building trees and visualising different attachment interpretations clarified how ambiguity is handled computationally.

References

- Zimmerman, V. (2019) ‘Getting to Grips with Parse Trees’, Towards Data Science.

Theory

Artificial Neural Networks (ANNs)

ANNs are adaptive learning models inspired by the brain, built from layers of weighted neurons (input, hidden, output) that pass activations forward when thresholds are met. Trained on labeled data, they tune their weights to improve predictions, which makes them well suited to supervised tasks such as image recognition, speech recognition, and fraud or bot detection. A simple workflow is: prepare labeled examples, extract informative features, learn weights, then generalize to new inputs. Despite strong performance, ANNs typically need lots of data and compute, and their decisions can be hard to interpret because of their many interacting parameters.
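A minimal sketch of the idea of weighted inputs, a threshold, and weight tuning, assuming the simplest possible case: a single threshold neuron (a perceptron) trained on the logical AND function. The function names, the integer learning rate, and the AND data set are illustrative assumptions; a real ANN would have several layers and far more data.

```python
def step(weighted_sum):
    """Threshold activation: the neuron fires only above zero."""
    return 1 if weighted_sum > 0 else 0


def predict(weights, bias, inputs):
    return step(sum(w * x for w, x in zip(weights, inputs)) + bias)


def train(examples, epochs=10, learning_rate=1):
    """Perceptron rule: nudge the weights whenever a prediction is wrong."""
    weights = [0] * len(examples[0][0])
    bias = 0
    for _ in range(epochs):
        for inputs, target in examples:
            error = target - predict(weights, bias, inputs)
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Labeled examples: (inputs, expected output) for logical AND.
AND_GATE = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train(AND_GATE)
predictions = [predict(weights, bias, x) for x, _ in AND_GATE]
print(predictions)  # → [0, 0, 0, 1]
```

The learned weights are just three numbers here, so the decision is easy to read off; the interpretability problem mentioned above arises once thousands or millions of such parameters interact.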

Deep Learning

Deep learning extends ANNs by stacking many layers and letting the model learn features automatically, which is especially powerful at scale with large datasets. Using forward propagation and backpropagation, deep models such as CNNs (images/audio) and RNNs (sequences/NLP) learn hierarchical representations and deliver state-of-the-art results across everyday applications (voice assistants, fraud detection) and advanced systems (self-driving). Compared to traditional ML, deep learning reduces manual feature engineering and handles unstructured data directly, trading interpretability for accuracy and performance.
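Forward propagation and backpropagation can be illustrated with a very small network. The sketch below assumes one hidden layer of two sigmoid neurons, a mean squared error loss, full-batch gradient descent, and hand-picked starting weights on the XOR problem; all of these choices and names are assumptions made for illustration, and real deep models use many more layers and a library such as PyTorch or JAX.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(x, params):
    """Forward propagation: inputs -> hidden activations -> output."""
    w1, b1, w2, b2 = params
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(w1, b1)]
    output = sigmoid(sum(w * h for w, h in zip(w2, hidden)) + b2)
    return hidden, output


def loss(data, params):
    """Mean squared error over the whole data set."""
    return sum((forward(x, params)[1] - y) ** 2 for x, y in data) / len(data)


def train_step(data, params, lr):
    """One gradient-descent step; gradients come from backpropagation."""
    w1, b1, w2, b2 = params
    g_w1 = [[0.0] * len(row) for row in w1]
    g_b1 = [0.0] * len(b1)
    g_w2 = [0.0] * len(w2)
    g_b2 = 0.0
    for x, y in data:
        hidden, out = forward(x, params)
        # Error signal at the output neuron (chain rule through the sigmoid).
        d_out = 2 * (out - y) * out * (1 - out) / len(data)
        g_b2 += d_out
        for j, h in enumerate(hidden):
            g_w2[j] += d_out * h
            # Propagate the error signal back to hidden neuron j.
            d_hid = d_out * w2[j] * h * (1 - h)
            g_b1[j] += d_hid
            for i, xi in enumerate(x):
                g_w1[j][i] += d_hid * xi
    return (
        [[w - lr * g for w, g in zip(row, g_row)]
         for row, g_row in zip(w1, g_w1)],
        [b - lr * g for b, g in zip(b1, g_b1)],
        [w - lr * g for w, g in zip(w2, g_w2)],
        b2 - lr * g_b2,
    )


XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
# Hand-picked starting weights (an assumption; normally these are random).
initial = ([[0.5, -0.4], [-0.3, 0.6]], [0.1, -0.1], [0.7, -0.5], 0.5)

params = initial
for _ in range(500):
    params = train_step(XOR, params, lr=0.5)

print("loss before:", loss(XOR, initial))
print("loss after: ", loss(XOR, params))
```

The loss after training is lower than before, but a network this small may still not solve XOR perfectly from this starting point; the sketch is meant to show the mechanics of the two passes, not a tuned model.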

Contributions & Artefacts

Third Weekly Discussion - Initial Post

Deep Learning has enabled the emergence of generative models such as OpenAI’s DALL·E, which can produce realistic images from text prompts, or ChatGPT, which generates prose. These models raise important ethical concerns, particularly around artistic rights and the nature of creativity. In this post, I focus on the artistic rights perspective.

Generative models are trained on massive datasets of images or texts scraped from the internet, often without explicit consent from the original creators. This leads to unresolved issues of copyright and intellectual property. As Abbott and Rothman (2023) note, image synthesis models can generate works that closely resemble the style of identifiable artists, blurring the line between inspiration and infringement. Such outputs risk undermining the livelihoods of artists by reproducing their styles without attribution or compensation. Ethical frameworks need to ensure that artists’ rights are protected, that attribution is transparent, and that society maintains a clear distinction between recombination and genuine creativity (Al-Kfairy et al., 2024).

Beyond legal rights, there is also a philosophical question of what constitutes creativity. Generative models do not create in the human sense but remix statistical patterns from their training data. As Floridi and Chiriatti (2020) argue, these systems are fundamentally parasitic, as they depend entirely on pre-existing works to simulate novelty. Unlike human creativity, which involves intentionality, context, and meaning-making, AI-generated outputs reflect recombinations of prior artefacts. This challenges the cultural value we assign to originality and authorship.

Finally, the proliferation of such tools risks a shift in cultural production where efficiency and mass generation replace originality and craft. I am interested in hearing what my peers think about this.

Reflection

Weekly Reflection

Artificial Neural Networks were introduced as adaptive models for supervised learning. The initial post for Discussion 3 allowed me to reflect on their strengths and challenges, particularly around data and interpretability.

References

- University of Essex Online (2025) ‘Introduction to Adaptive Algorithms’ [Online learncast]. In: Intelligent Agents.

- Al-Kfairy, M., Mustafa, D., Kshetri, N., Insiew, M. and Alfandi, O. (2024) ‘Ethical challenges and solutions of generative AI: An interdisciplinary perspective’, Informatics, 11(3), p. 58.

- Abbott, R. and Rothman, E. (2023) ‘Disrupting creativity: Copyright law in the age of generative artificial intelligence’, Florida Law Review, 75, p. 1141.

- Floridi, L. and Chiriatti, M. (2020) ‘GPT-3: Its nature, scope, limits, and consequences’, Minds and Machines, 30(4), pp. 681–694.

Theory

How Deep Learning can improve productivity and boost business

Deep learning mimics the brain’s decision-making by learning from examples and identifying complex patterns in massive datasets. It drives everyday applications such as virtual assistants, fraud detection, chatbots, and medical diagnoses, while also enabling advanced use cases like self-driving cars. For businesses, deep learning boosts productivity and competitiveness by automating predictive analytics, uncovering customer trends, reducing costs, and supporting data-driven decision-making. However, challenges include high data demands, risks of bias, and the “black box” nature of its models, requiring strong governance and transparency measures.

Contributions & Artefacts

Third Weekly Discussion - Peer Responses

Peer Response 1

Hi Abdulla, thank you for your clear and informative post. I found your framing of misinformation, privacy, and environmental costs especially compelling, and I also liked how you connected these issues to the Responsible Innovation framework.

Building on your discussion of misinformation, Weidinger et al. (2022) propose a taxonomy of harms from large language models, ranging from disinformation and bias reinforcement to long-term risks of trust loss. Their work complements your point by underlining the need for systematic risk evaluation across technical, social, and cultural domains. Similarly, Solaiman et al. (2023) outline an evaluation framework for assessing generative AI’s societal impact, emphasising that governance cannot only be reactive but must also anticipate harms across creativity, labor, and equity.

Another area I want to pay more attention to is the creative domain. While misinformation and bias are pressing issues, generative systems also raise deep questions about artistic rights and cultural labor. As recent legal scholarship shows (Abbott and Rothman, 2023), the reproduction of styles without consent risks eroding recognition of human authorship.

Overall, I strongly agree with your conclusion that responsible innovation requires collaboration between developers and policymakers.

Peer Response 2

Hi Nikos, thank you for your insightful post. I especially appreciated how you tied copyright frameworks to the broader issue of cultural diversity. Your point about dataset transparency and fair remuneration is well taken and aligns with current voices arguing that generative AI systems must be embedded within equitable governance structures. For example, Al-Kfairy et al. (2024) highlight how unresolved copyright and licensing mechanisms create systematic risks of uncompensated extraction that can erode creative work.

I would like to build on your point about the homogenisation of outputs. Hagendorff (2024) presents a scoping review of generative AI ethics, identifying originality and authorship as recurring concerns. If models privilege statistically common patterns, as you note with Doshi et al. (2024), they risk not only narrowing cultural expression but also marginalising minority or experimental styles. Ethical frameworks must safeguard the diversity of cultural production.

At the same time, while opt-out and licensing mechanisms provide some protection, they may not resolve the underlying asymmetry between large technology firms and individual artists.

Deep Learning in Action

1) Overview of the technology

Deep learning is increasingly embedded across industries, in everything from conversational agents to complex decision systems. One major enterprise platform leveraging it is Palantir’s Artificial Intelligence Platform (AIP). AIP integrates large language models (LLMs) into mission-critical workflows (such as defence, healthcare, and logistics) via a secure, auditable layer that blends AI with live operational data (Palantir, 2025). It enables users to query and act upon organisational data through natural language interfaces, effectively bringing deep learning into enterprise operations in ways previously confined to research labs (Palantir, 2025).

2) Brief synopsis of how it works

AIP does not just expose models to raw data; it incorporates LLMs within a broader operational infrastructure, including semantic ontologies, human-in-the-loop oversight, access controls, encryption, and full audit logging (Palantir, 2025). Users interact through GUI-built agents that map to the organisation’s ontology; outputs must pass human approval, ensuring the system stays within compliance and governance boundaries (Palantir, 2025). This architecture reframes deep learning not as autonomous intelligence, but as an orchestrated tool within controlled workflows.

3) Potential implications (ethics, privacy, civil rights)

While AIP delivers operational efficiencies, its deployment has drawn intense criticism. In the UK, expansion of Palantir’s AI tools into the NHS and MoJ stirred concerns over privacy, transparency, and militarised surveillance, especially given Palantir’s intelligence-sector links (Financial Times, 2024; The Guardian, 2024). Civil rights advocates highlight the risk of unaccountable AI-driven decision-making, such as re-offending risk prediction or mass data consolidation, that could disproportionately impact vulnerable populations without public scrutiny (The Guardian, 2024; The Guardian, 2025). These concerns underscore the broader debate: embedding deep learning into public services may boost capability, but it also threatens civil liberties if governance is insufficient.

Reflection

Weekly Reflection

Deep learning applications showed me how productivity and competitiveness can be enhanced. Responding to peers and reading about business use cases highlighted both the power and governance challenges of deploying these models.

References

- World Economic Forum (2022) How Deep Learning can improve productivity and boost business.

- Palantir (2025) Artificial Intelligence Platform (AIP). Available at: https://www.palantir.com/platforms/aip/ (Accessed: 24 September 2025).

- Palantir (2025) AIP security and privacy. Available at: https://www.palantir.com/docs/foundry/aip/aip-security (Accessed: 24 September 2025).

- The Guardian (2024) Tech firm Palantir spoke with MoJ about calculating prisoners’ ‘reoffending risks’, 16 November. Available at: https://www.theguardian.com/technology/2024/nov/16/tech-firm-palantir-spoke-with-moj-about-calculating-prisoners-reoffending-risks (Accessed: 24 September 2025).

- The Guardian (2025) Pinto, J.S. ‘Palantir’s tools pose an invisible danger we are just beginning to comprehend’, 24 August. Available at: https://www.theguardian.com/commentisfree/2025/aug/24/palantir-artificial-intelligence-civil-rights (Accessed: 24 September 2025).

- Weidinger, L., Uesato, J., Rauh, M., Griffin, C., Huang, P.S., Mellor, J., Glaese, A., Cheng, M., Balle, B., Kasirzadeh, A. and Biles, C. (2022) ‘Taxonomy of risks posed by language models’, in Proceedings of the 2022 ACM Conference on Fairness, Accountability, and Transparency, pp. 214–229.

- Solaiman, I., Talat, Z., Agnew, W., Ahmad, L., Baker, D., Blodgett, S.L., Chen, C., Daumé III, H., Dodge, J., Duan, I. and Evans, E. (2023) ‘Evaluating the social impact of generative AI systems in systems and society’, arXiv preprint arXiv:2306.05949.

- Abbott, R. and Rothman, E. (2023) ‘Disrupting creativity: Copyright law in the age of generative artificial intelligence’, Florida Law Review, 75, p. 1141.

- Al-Kfairy, M., Mustafa, D., Kshetri, N., Insiew, M. and Alfandi, O. (2024) ‘Ethical challenges and solutions of generative AI: An interdisciplinary perspective’, Informatics, 11(3), p. 58. Multidisciplinary Digital Publishing Institute. doi:10.3390/informatics11030058.

- Hagendorff, T. (2024) ‘Mapping the ethics of generative AI: A comprehensive scoping review’, Minds and Machines, 34(4), p. 39. doi:10.1007/s11023-024-09693-0.

- Doshi, A.R., Tang, A., Leung, M., Lin, A., Melkonyan, A., Liu, A., Dorn, C., Sethi, A., Prasad, P., Xu, C., Bailey, J. and Yang, D. (2024) ‘Generative AI enhances individual creativity but reduces diversity’, Science Advances, 10(21), eadn5290. https://doi.org/10.1126/sciadv.adn5290

Theory

Industry 4.0

Industry 4.0 describes the digital transformation of manufacturing and production, driven by globalized markets, data ubiquity, and advances in intelligent technologies. Key enablers include robotics, IoT, 3D printing, digital twins, and advanced data analytics, which allow factories to be highly connected, automated, and adaptive. Concepts like digital twins and industrial IoT improve product lifecycles and predictive maintenance, while companies such as FANUC leverage AI for monitoring and failure prevention. Agent-based computing supports smart factories through autonomous, social, and cooperative systems that can self-organize, though coordination challenges remain. Beyond manufacturing, the financial sector uses intelligent technologies for credit scoring, fraud detection, and economic modelling, demonstrating how Industry 4.0 reshapes efficiency, productivity, and decision-making across domains.

Deep Learning in Action

Deep learning goes beyond data analysis to content generation, with applications ranging from rap lyric generation (DopeLearning) to AI-driven art creation (DALL·E). DopeLearning combines neural networks with ranking algorithms to predict and assemble rhyming lines, producing lyrics that even outperform human rappers in rhyme density. DALL·E, developed by OpenAI, uses GPT-3 and diffusion modelling to generate realistic images from text prompts by progressively degrading and reconstructing images, learning to align visuals with descriptive text. These systems highlight the creative potential of deep learning, showing how AI can extend into cultural production while raising questions about originality, authorship, and the social role of machine creativity.
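The "progressively degrading" half of diffusion modelling can be sketched numerically. The block below is a hedged illustration of the textbook forward (noising) process used in denoising diffusion models, not DALL·E's actual implementation: the linear noise schedule, its start and end values, the 1000-step horizon, and the function names are all assumptions, and the learned reconstruction step is left out because it requires a trained network.

```python
import math
import random


def linear_betas(steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances rising linearly (an assumed schedule)."""
    span = max(steps - 1, 1)
    return [beta_start + (beta_end - beta_start) * t / span
            for t in range(steps)]


def signal_kept(betas, t):
    """Fraction of the original signal variance left after t noising steps."""
    kept = 1.0
    for beta in betas[:t]:
        kept *= 1.0 - beta
    return kept


def add_noise(pixels, t, betas, rng):
    """Jump straight to step t: scale the image down and mix in Gaussian noise."""
    kept = signal_kept(betas, t)
    return [math.sqrt(kept) * p + math.sqrt(1.0 - kept) * rng.gauss(0.0, 1.0)
            for p in pixels]


betas = linear_betas(1000)
rng = random.Random(0)
image = [0.2, 0.8, -0.5, 1.0]  # a toy "image" of four pixel values

for t in (0, 10, 500, 1000):
    print(t, round(signal_kept(betas, t), 5), add_noise(image, t, betas, rng))
```

At step 0 the image is unchanged, and by the final step almost none of the original signal remains. A diffusion model is trained to undo this degradation step by step, and in text-to-image systems that reversal is guided by the text prompt.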

Contributions & Artefacts

Third Weekly Discussion - Summary Post

Our discussion on deep learning based generative models has highlighted both their creative potential and the significant ethical issues they raise when it comes to originality and authorship. In my initial post, I focused on artistic rights, emphasising how models like DALL·E reproduce styles without consent and challenge our understanding of originality (Abbott and Rothman, 2023; Floridi and Chiriatti, 2020). I argued that ethical frameworks must protect creators while maintaining a clear distinction between recombination and genuine creativity (Al-Kfairy et al., 2024).

Nikos expanded this perspective by stressing the importance of remuneration and dataset transparency. His point that generative AI risks narrowing cultural diversity by privileging statistically common patterns (Doshi et al., 2024) was particularly compelling. This suggests that economic protections alone are not enough (Hagendorff, 2024).

Abdulla provided a broader frame, connecting generative AI to misinformation, privacy, bias, and sustainability. He proposed the Responsible Innovation framework (Owen et al., 2013) as a guiding tool. I found it valuable that he reminded us of harms beyond creativity, especially misinformation and trust erosion (Weidinger et al., 2022). At the same time, I argued that cultural and artistic concerns must also be part of responsible innovation to ensure creators’ voices are not sidelined.

Overall, the consensus seems to be that generative AI is not inherently problematic, but its deployment requires robust governance that balances innovation with the protection of human creativity.

Reflection

Weekly Reflection

Industry 4.0 tied the ideas of intelligent agents back into real-world digital transformation. I found the examples of digital twins and self-organising factories especially relevant, and the summary post showed how deep learning can extend into creative and cultural domains.

References

- University of Essex Online (2025) ‘Intelligent Agents in Action’ [Online learncast]. In: Intelligent Agents.

- Abbott, R. and Rothman, E. (2023) ‘Disrupting creativity: Copyright law in the age of generative artificial intelligence’, Florida Law Review, 75, p. 1141.

- Al-Kfairy, M., Mustafa, D., Kshetri, N., Insiew, M. and Alfandi, O. (2024) ‘Ethical challenges and solutions of generative AI: An interdisciplinary perspective’, Informatics, 11(3), p. 58.

- Floridi, L. and Chiriatti, M. (2020) ‘GPT-3: Its nature, scope, limits, and consequences’, Minds and Machines, 30(4), pp. 681–694.

- Hagendorff, T. (2024) ‘Mapping the ethics of generative AI: A comprehensive scoping review’, Minds and Machines, 34(4), p. 39.

- Weidinger, L., Uesato, J., Rauh, M., Griffin, C., Huang, P.S., Mellor, J., Glaese, A., Cheng, M., Balle, B., Kasirzadeh, A. and Biles, C. (2022) ‘Taxonomy of risks posed by language models’, in Proceedings of the 2022 ACM Conference on Fairness, Accountability, and Transparency, pp. 214–229.

Theory

The Future of Intelligent Agents

This week’s required reading by Nasim, Ali, and Kulsoom (2022) explores AI incidents through an ethical lens, highlighting challenges such as bias, accountability, and unintended consequences. To deepen understanding, self-directed reading is suggested using terms like future directions of AI, evolution of AI, and ethics of AI, encouraging broader exploration of both the opportunities and risks shaping AI’s development.

Contributions & Artefacts

This week, our work was dedicated to the submission of our e-Portfolio, with no other contributions or artefacts required.

Reflection

Weekly Reflection

The final week centred on ethics and the future of agents, which helped me step back and critically reflect. Reading about AI incidents and risks of bias underscored the importance of responsible design alongside the technical knowledge gained throughout the module.

References

- Nasim, S.F., Ali, M.R. and Kulsoom, U. (2022) 'Artificial Intelligence Incidents & Ethics A Narrative Review', IJTIM, 2(2), pp. 52-64.

Assessments

This module consists of the following three graded assessments:

- A group project (20% of grade - due in week 6)

- An individual presentation (40% of grade - due in week 11)

- This e-Portfolio page alongside a personal reflection (40% of grade - due in week 12)

You can find more details about the teamwork and individual assessments on the following sub-pages: