RESEARCH METHODS AND PROFESSIONAL PRACTICE

Artefacts from this module

Background

This section of the e-portfolio documents my learning and development throughout the Research Methods and Professional Practice module, aligning with the specified requirements and grading criteria. It includes artefacts and reflections that demonstrate my progress, contributions, and insights gained during the module.

Weekly Entries

Contributions & Artefacts

Weekly Discussion 1 - Initial Post

The Accessibility in Software Development case demonstrates how neglecting professional ethics can result in social exclusion and reputational harm. AllTogether’s decision to release an inaccessible software version, despite awareness of its flaws, conflicts with several of the principles of the Association for Computing Machinery (ACM) Code of Ethics (ACM, 2025).

Looking more closely at the principles, the decision violates principle 1.1, which requires computing professionals to contribute to society and human well-being. It also conflicts with principle 1.4, which calls on professionals to be fair and take action not to discriminate.

By excluding users with disabilities from effectively using the tool, the company failed to uphold its ethical duty to promote equitable access to technology. In doing so, it discriminated against a group of users and failed to contribute to society and human well-being.

Similarly, the British Computer Society (BCS) Code of Conduct stresses inclusion, integrity, and responsibility. The case clearly breaches the BCS Public Interest principle, which requires members to have due regard for the wellbeing of others and to promote equal access to the benefits of IT (BCS, 2025).

Furthermore, by prioritising commercial deadlines over compliance and accessibility, the company failed to demonstrate professional competence and integrity. Both codes require computing professionals to act within their competence and to comply with relevant legislation and standards.

Ethics in Computing in the age of Generative AI

Since late 2022, generative AI has accelerated long-standing debates about AI governance, highlighting that while core values such as transparency, accountability, and fairness are widely endorsed, there is little consensus on how these should be implemented in practice across different political and cultural contexts (Correa et al., 2023). As countries respond differently, ranging from precautionary regulation in the EU to market-driven approaches in the US and state-centric control in China, AI governance is increasingly shaped by geopolitical and economic interests rather than shared ethical frameworks (Deckard, 2023). In my view, a suitable course of action is a pragmatic, layered governance model that prioritises shared procedural standards (e.g. transparency, auditability, and accountability) while allowing contextual, risk-based regulation for high-impact uses of generative AI. This approach would help balance innovation with safeguards, strengthen public trust, and provide clearer professional guidance for computing practitioners, while acknowledging the legal and social realities of a fragmented global regulatory landscape (Correa et al., 2023; Deckard, 2023).

References

- Correa, N.K., Grohmann, R., West, S.M. and Crawford, K. (2023) ‘Governing artificial intelligence: Emerging principles and practices’, AI & Society, 38(2), pp. 1–15.

- Deckard, J. (2023) AI geopolitics: Power, regulation and the global order. Cambridge: Polity Press.

- ACM. (2025). ACM Code of Ethics and Professional Conduct. Association for Computing Machinery. Available at: https://www.acm.org/code-of-ethics

- BCS. (2025). BCS Code of Conduct. British Computer Society. Available at: https://www.bcs.org/membership-and-registrations/become-a-member/bcs-code-of-conduct/

Contributions & Artefacts

Weekly Discussion 1 - Peer Response 1

Thank you for your post. You’ve presented an insightful analysis of the Blocker Plus case and effectively connected it to both the BCS Code of Conduct and wider AI governance principles. I especially agree with your interpretation that the failure to prevent bias and data poisoning represents a breach of the duty to act in the public interest (BCS, 2021). What really stands out to me is the tension between technical reliability and moral accountability. Once the model was compromised, the developers’ decision to preserve it prioritised operational continuity over social responsibility, a choice that undermines public trust in AI systems.

Your link to Corrêa et al. (2023) is crucial because, as they highlight, voluntary ethical frameworks alone cannot ensure compliance, a point I agree with. This case underscores the need for mandatory auditing and explainability provisions, such as those being institutionalised in the EU AI Act (European Commission, 2023). Embedding periodic bias audits and red-team testing could have mitigated the manipulation you describe.

I wonder how you view the role of external oversight. Do you think professional bodies like the BCS should be empowered to sanction such ethical violations, or should this fall to independent AI regulators? That distinction seems central to bridging the current gap between professional ethics and enforceable accountability.

Weekly Discussion 1 - Peer Response 2

You have provided a thoughtful and balanced interpretation of the Corazón case, particularly in recognising that ethical computing in healthcare extends beyond regulatory compliance. I agree with your observation that the company’s transparency and collaboration with external researchers demonstrate ethical maturity, which aligns directly with the ACM Code of Ethics’ duties to avoid harm and to maintain honesty in system reporting (ACM, 2018).

Your discussion raises an important tension between innovation and safety. As Camara, Peris-Lopez and Tapiador (2015) note, the same wireless connectivity that enables life-saving interventions also provides the attack surface for malicious exploitation. This duality makes proactive ethics essential. We should aim to embed ethics by design, which can be achieved by incorporating security and privacy risk assessments throughout the product lifecycle (Floridi and Cowls, 2022).

One question your post implies is how far liability should extend when independent researchers uncover vulnerabilities. Should the responsibility remain solely with the manufacturer, or does the research community share a duty to disclose and mitigate risks responsibly? I think the research community does share that duty. What are your thoughts?

Literature Review and Research Proposal Outlines

| Question | Answer (Topic: Gender Pay Gap in the Technology Sector in Switzerland) |
|---|---|
| What is the focus and aim of your review? Who is your audience? | The review examines the persistence, causes, and implications of the gender pay gap within Switzerland’s technology sector. It aims to identify structural, cultural, and policy-related factors contributing to wage disparities. The intended audience includes academics, policymakers, HR professionals, and diversity advocates in the Swiss tech industry. |
| Why is there a need for your review? Why is it significant? | Despite Switzerland’s reputation for innovation and equality, data from the Federal Statistical Office (BFS, 2023) show a persistent pay gap in tech roles. The topic is significant because it intersects with issues of gender equity, economic competitiveness, and talent retention in a key national industry. |
| What is the context of the topic or issue? What perspective do you take? What framework do you use to synthesise the literature? | The review situates the issue within broader discussions on gender bias, labour market segmentation, and workplace culture in STEM fields. It adopts a socio-economic and organizational behaviour perspective, synthesizing literature using the Gender Inequality Framework and Human Capital Theory to connect structural and individual determinants. |
| How did you locate and select sources for inclusion in the review? | Academic databases such as Google Scholar, JSTOR, and the University of Essex Online Library were searched using keywords like “gender pay gap Switzerland,” “tech industry inequality,” and “STEM wage disparity.” Inclusion criteria focused on empirical studies, official reports (e.g., BFS, OECD), and publications from the last 10 years. |
| How is your review structured? | The review begins with an overview of the gender pay gap in Switzerland, followed by an analysis of sector-specific trends in technology. It then examines causes (education, experience, bias, work culture), evaluates policy and organizational interventions, and concludes with research gaps and recommendations. |
| What are the main findings in the literature on this topic? | Studies consistently show that women in Swiss tech earn 10–20% less than men with similar qualifications (OECD, 2023). Contributing factors include underrepresentation in leadership roles, part-time work patterns, negotiation differences, and unconscious bias in promotion and pay-setting processes. |
| What are the main strengths and limitations of this literature? | Strengths include the availability of quantitative wage data and detailed occupational analyses. Limitations include a lack of longitudinal studies, limited qualitative insights on workplace culture, and few cross-industry comparisons isolating technology from general STEM fields. |
| Are there any discrepancies in this literature? | Yes. While some authors attribute the pay gap primarily to structural bias and gendered socialization, others argue that career interruptions and self-selection explain most of the variance. There is also inconsistency in how bonuses and stock-based compensation are measured. |
| What conclusions do you draw from the review? What do you argue needs to be done as an outcome of the review? | The review concludes that the Swiss tech sector’s gender pay gap reflects systemic and cultural factors rather than mere individual choice. It calls for transparent salary reporting, gender-neutral recruitment, targeted mentoring programmes, and stronger enforcement of equal pay legislation to promote fairness and competitiveness. |

References

- BCS (2021) Code of Conduct. British Computer Society.

- European Commission (2023) Proposal for a Regulation Laying Down Harmonised Rules on Artificial Intelligence (AI Act). Brussels. Available at: https://digital-strategy.ec.europa.eu/en/library/proposal-regulation-laying-down-harmonised-rules-artificial-intelligence

- Corrêa, N.K., Galvão, C., Santos, J.W., Del Pino, C., Pinto, E.P., Barbosa, C., Massmann, D., Mambrini, R., Galvão, L., Terem, E. and de Oliveira, N. (2023) ‘Worldwide AI ethics: A review of 200 guidelines and recommendations for AI governance’, Patterns, 4(10).

- ACM (2018) ACM Code of Ethics and Professional Conduct. Available at: https://www.acm.org/code-of-ethics

- Camara, C., Peris-Lopez, P. and Tapiador, J. E. (2015) ‘Security and privacy issues in implantable medical devices: A comprehensive survey’, Journal of Biomedical Informatics.

- Floridi, L. and Cowls, J. (2022) A Unified Framework of Five Principles for AI in Society. Harvard Data Science Review, 4(1).

Contributions & Artefacts

Weekly Discussion 1 - Summary Post

The first discussion forum highlighted how professional ethics in computing must evolve from static codes into dynamic frameworks to ensure accountability. Across the Blocker Plus and Corazón case studies, a recurring theme emerged: responsible innovation demands not only technical excellence but also moral vigilance.

In the Blocker Plus discussion, the manipulation of machine-learning feedback loops exposed how algorithmic bias can undermine fairness and inclusivity. Even when systems comply with formal legislation, their outcomes can still conflict with the public-interest principle of the BCS Code of Conduct (BCS, 2021). This aligns with Corrêa et al. (2023), who found that most global AI-ethics guidelines remain voluntary and lack enforceable accountability.

The Corazón case, on the other hand, exemplified proactive ethics through collaboration with researchers and transparent risk disclosure, embedding an ethics-by-design approach during system development. This aligns with Floridi and Cowls (2022). Such an approach ensures that safety, privacy, and fairness are systematically integrated throughout the technology lifecycle rather than retrofitted after harm occurs.

Together, these cases reinforced my understanding that professionalism in computing means acting as both innovator and moral steward.

Research Proposal Review

| Question | Answer (Topic: Gender Pay Gap in the Technology Sector in Switzerland) |
|---|---|
| Which of the methods described in this week’s reading would you think would suit your purpose? | A mixed methods approach would best suit this study. Quantitative analysis can assess salary data, job levels, and gender distribution using statistical tools, while qualitative research can explore underlying causes such as workplace culture, career interruptions, or negotiation behaviour. This combination supports a more holistic understanding of both measurable disparities and the social dynamics behind them. |
| Which data collection methods would you consider using? | Secondary data from official sources like the Swiss Federal Statistical Office (BFS), OECD, and World Economic Forum would be used to analyse existing pay and employment statistics. Additionally, primary qualitative data could be collected through semi-structured interviews with employees, HR managers, or policymakers in the Swiss tech sector. This would reveal perceptions of fairness, barriers to promotion, and experiences related to gender equality policies. |
| Which required skills will you need to have or develop for the chosen project? | The project will require several research and analytical skills: |

References

- BCS (2021) Code of Conduct. British Computer Society.

- Corrêa, N. K. et al. (2023) ‘Worldwide AI Ethics: A Review of 200 Guidelines and Recommendations for AI Governance’, Patterns, 4(10), 100857.

Contributions & Artefacts

Literature Review Outline

This week I handed in my literature review outline to get formative feedback from the tutor.

Contributions & Artefacts

Case Study: Inappropriate Use of Surveys

The Cambridge Analytica case demonstrated how surveys can be misused to collect personal data at scale. Data was gathered through seemingly harmless Facebook surveys, which enabled access not only to participants’ data but also to data from their social networks without informed consent. This information was later used for political profiling and targeted advertising, particularly during the 2016 US presidential election and the UK Brexit campaign (Confessore, 2018; Isaak and Hanna, 2018).

Surveys were chosen because they appeared low-risk and encouraged participation while obscuring the true purpose of data collection. Similar practices have been observed in other digital platforms, where survey-like consent mechanisms and behavioural prompts contribute to extensive user profiling with limited transparency (European Data Protection Board, 2021).

Ethically, these cases highlight serious failures in informed consent and transparency. Socially, they have contributed to declining public trust in digital platforms and research practices. Legally, they have resulted in increased regulatory scrutiny and enforcement under data protection laws such as GDPR. From a professional perspective, these examples underline the importance of ethical research design, clear consent mechanisms, and responsible data governance.

References

- Confessore, N. (2018) Cambridge Analytica and Facebook: The scandal and the fallout so far. The New York Times, 4 April. Available at: https://www.nytimes.com/2018/04/04/us/politics/cambridge-analytica-scandal-fallout.html (Accessed: 02 January 2026).

- Isaak, J. and Hanna, M.J. (2018) ‘User data privacy: Facebook, Cambridge Analytica, and privacy protection’, Computer, 51(8), pp. 56–59. https://doi.org/10.1109/MC.2018.3191268

- European Data Protection Board (2021) Guidelines on consent under Regulation (EU) 2016/679 (GDPR). Available at: https://edpb.europa.eu (Accessed: 02 January 2026).

Contributions & Artefacts

Contributions

There were no specific week 6 artefacts; the week was spent working on the assessment due in week 7.

Theory

Contributions & Artefacts

Initial Post

Abi’s situation exposes a fundamental tension between using analytical flexibility and abusing it. While he is not altering data, selectively choosing analyses that present Whizzz in a favourable light is still an ethical breach because it intentionally exploits methodological ambiguity to guide readers toward a misleading conclusion. Gelman and Loken’s (2020) work on the garden of forking paths demonstrates how multiple plausible analytical decisions can lead to contradictory results, and how failing to disclose this flexibility constitutes a form of scientific misrepresentation.

From an ethical perspective, Abi is obligated to present both positive and negative analyses. Research integrity frameworks emphasise transparency, completeness, and the avoidance of harm, especially when findings may influence consumers’ health (Tennant, 2020). Withholding adverse nutritional findings is not a neutral omission, as it directly increases the likelihood of consumer risk and undermines trust in scientific reporting.

Abi’s responsibility does not end with producing accurate statistics. If he expects the manufacturer to selectively report only favourable outcomes, he has a duty to mitigate foreseeable misuse. Contemporary perspectives in data ethics stress that researchers share responsibility for downstream impacts of their work and must adopt precautionary measures when results may be misrepresented (Floridi and Taddeo, 2021). This could include providing an integrated report where negative results cannot be detached from their context, documenting limitations explicitly, or seeking institutional guidance.

In short, transparency is never optional. It is a professional and ethical obligation. Allowing the client to selectively publish only favourable findings is not a neutral act but a clear deviation from responsible research practice.

Activity 7.1 & 7.2

As part of this unit’s activities, I completed the required analytical exercises and practical tasks, focusing on applying statistical and analytical methods to real datasets. This included calculating summary measures, conducting hypothesis testing, and interpreting results in a structured and critical manner. Throughout the process, I documented intermediate results, assumptions, and interpretations, ensuring transparency and reproducibility of the analysis.
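The kind of calculation involved can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the exercises themselves were done in a spreadsheet, the sample values and the hypothesised mean `mu0` are made up, and `one_sample_t` is a hypothetical helper name rather than part of the module material.

```python
import math
import statistics


def one_sample_t(sample, mu0):
    """Return the sample mean, sample standard deviation and one-sample t statistic."""
    n = len(sample)
    mean = statistics.mean(sample)
    sd = statistics.stdev(sample)        # sample standard deviation (n - 1 denominator)
    standard_error = sd / math.sqrt(n)
    t = (mean - mu0) / standard_error    # distance of the mean from mu0 in standard errors
    return mean, sd, t


# Made-up measurements, used only to show the calculation.
sample = [5.1, 4.9, 5.6, 5.8, 5.2, 5.4]
mean, sd, t = one_sample_t(sample, mu0=5.0)
print(f"mean={mean:.3f}, sd={sd:.3f}, t={t:.3f}, df={len(sample) - 1}")
```

The resulting statistic would then be compared against a t distribution with n - 1 degrees of freedom to decide whether to reject the null hypothesis.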

Exa 7.1B


Exa 7.2B


Exa 7.3D


Exa 7.4G


Exa 7.4F


Exa 7.6B


Choosing the topic for your literature review and presentation

Because the topic cannot be reused for my Master’s thesis or project, I decided to choose a topic that has little to no overlap with the areas I am thinking of choosing for my Master’s thesis or project.

- 5. What is the gender pay gap in the technology sector in the country of your choice?

Literature Review

References

- Gelman, A. and Loken, E. (2020). The garden of forking paths: Statistical analysis and the search for significance. In: Bond, H. and Dubois, D. (eds.) Beyond Multiple Linear Regression. Boca Raton: CRC Press.

- Tennant, J.P. (2020). The state of the art in peer review. FEMS Microbiology Letters, 367(6). Available at: https://academic.oup.com/femsle/article/365/19/fny204/5078345 (Accessed: 06.12.2025).

- Floridi, L. and Taddeo, M. (2016). What is data ethics? Philosophical Transactions of the Royal Society A: Mathematical, Physical and Engineering Sciences, 374(2083), 20160360. Available at: https://doi.org/10.1098/rsta.2016.0360 (Accessed: 06.12.2025).

Contributions & Artefacts

Weekly Discussion 2 - Peer Response 1

Hi Gesine, Thank you for your insightful post. I’m with you on the key point that Abi can avoid outright fabrication and still behave unethically through method shopping. I would push the argument a step further and say that “present both sets of results” is necessary but not sufficient, because it still leaves too much room for interpretation. My proposal is that Abi should distinguish confirmatory from exploratory analyses and also disclose the degree of analytical flexibility used.

Gelman and Loken’s forking paths problem shows that, even without p-hacking, a large space of analytical choices can generate different conclusions. This is exactly why transparency about the analysis pathway should be included as well (Gelman and Loken, 2020).

I agree with your whistleblowing route, but I’d treat it as the last line of defence. Abi should reduce the risk of misuse earlier by writing a single narrative report that includes methods, pre-specified primary outcomes, and sensitivity analyses. Abi should also add an explicit warning against cherry-picking to the executive summary. That aligns with the idea that responsibility includes the downstream impact of analysis, especially where consumer harm is plausible (Floridi and Taddeo, 2016).

Weekly Discussion 2 - Peer Response 2

Hi Abdullah, Thank you for your strong post. I really like the distinction between legitimate sensitivity analysis and unethical selective framing. That difference is often hand-waved away as exploration, when in reality the ethical issue is about intent and disclosure. The question is whether we are trying to understand the data or trying to manufacture a story.

One angle I would add is that transparency should mean not only showing both sets of results but also making the analysis reproducible and auditable. One must clearly label what was pre-planned versus added later, describe the full model-search approach, and state how uncertainty should be interpreted. That is where good reporting practice and peer review norms matter. Transparency is an integrity mechanism, not a nice-to-have (Tennant, 2020).

I also like your action list. The only real-world wrinkle is contractual pressure. In commissioned research, the incentive gradient is tilted toward client satisfaction. That is exactly why early guardrails are a powerful tool: the aim is to not offer a choice of results at all, because once the sponsor sees negative health implications, the risk of selective communication spikes.

Your recommendations are practical and ethically aligned. I would just make the preventative move more explicit and set constraints even before the analysis.

e-Portfolio Activities: Charts and Inference Worksheet

During this part of the module, I completed a series of practical exercises focused on statistical analysis and data visualisation using Excel (and LibreOffice where applicable). The activities involved constructing percentage frequency bar charts and clustered column charts to compare categorical data across different groups. I also created relative frequency histograms by defining appropriate class intervals, calculating frequencies and relative frequencies, and visualising distributions to analyse patterns in the data.
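The steps behind a relative frequency histogram (define class intervals, count frequencies, divide by the total) can also be sketched in Python. This is a minimal illustration, not the worksheet solution: the exercises were completed in Excel and LibreOffice, the sample data are made up, and `relative_frequency_table` is a hypothetical helper name.

```python
from collections import Counter


def relative_frequency_table(values, lower, width, n_classes):
    """Count values in equal-width classes [start, start + width) and
    return (start, end, frequency, relative frequency) for each class."""
    counts = Counter()
    for v in values:
        index = int((v - lower) // width)   # which class the value falls into
        if 0 <= index < n_classes:          # ignore values outside the class range
            counts[index] += 1
    total = sum(counts.values())
    table = []
    for i in range(n_classes):
        start = lower + i * width
        freq = counts[i]
        rel = freq / total if total else 0.0
        table.append((start, start + width, freq, rel))
    return table


# Made-up sample data, used only to show the calculation.
sample = [12, 15, 17, 21, 22, 22, 25, 28, 31, 34]
table = relative_frequency_table(sample, lower=10, width=10, n_classes=3)
for start, end, freq, rel in table:
    print(f"{start}-{end}: frequency={freq}, relative frequency={rel:.2f}")
```

Plotting the relative frequencies as adjacent bars over the class intervals gives the histogram; the relative frequencies sum to 1 when every value falls inside the defined classes.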

Exe 8.1D


Exe 8.2D


Exe 8.3B


References

- Floridi, L. and Taddeo, M. (2016). What is data ethics? Philosophical Transactions of the Royal Society A: Mathematical, Physical and Engineering Sciences, 374(2083), 20160360. Available at: https://doi.org/10.1098/rsta.2016.0360 (Accessed: 13.12.2025).

- Gelman, A. and Loken, E. (2020). The garden of forking paths: Statistical analysis and the search for significance. In: Bond, H. and Dubois, D. (eds.) Beyond Multiple Linear Regression. Boca Raton: CRC Press.

- Tennant, J.P. (2020). The state of the art in peer review. FEMS Microbiology Letters, 367(6). Available at: https://academic.oup.com/femsle/article/365/19/fny204/5078345 (Accessed: 13.12.2025).

Contributions & Artefacts

Weekly Discussion 2 - Summary Post

The responses to my initial post clarified that Abi’s dilemma is not only an individual ethical issue but also a broader methodological topic shaped by regulatory and systemic pressures. Both replies I received reinforced the idea that selective use of analytical flexibility can be ethically equivalent to data manipulation, even when the underlying data remain unchanged (Gelman and Loken, 2020).

Gesine’s response extended the discussion beyond professional ethics alone into the legal and regulatory domain. By referring to EU General Food Law, she highlighted that wherever consumer health is concerned, scientific assessments are expected to be complete and risk-oriented, not selectively framed and interest-oriented. This strengthens the argument that omitting adverse findings may constitute not only an ethical failure but also a breach of regulatory duties designed to protect consumers (European Union, 2002).

Abdullah’s contribution went deeper into the methodological dimension of the debate by emphasising the importance of distinguishing between confirmatory and exploratory analyses. This aligns well with evidence on how undisclosed analytical flexibility and selective reporting distort the scientific record, even when no data are explicitly faked (Head et al., 2015).

The discussion confirms that Abi’s responsibility extends beyond producing technically correct statistics. Ethical research practice requires anticipation of foreseeable downstream misuse of the data due to different interests of stakeholders.

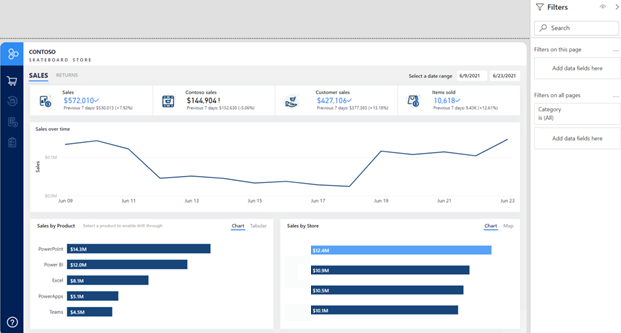

Practicing Business Visualisation with PowerBI

In this unit, I completed practical training focused on designing effective business reports using Power BI. The activities covered report structure, layout design, and the selection of appropriate visualisation types to communicate insights clearly to a target audience. I worked with report objects such as charts, tables, slicers, and filters, applying consistent formatting and interactive elements to improve usability and readability. As part of the lab exercise, I used the Power BI service to design an analytical report, applying filters and slicers to support user-driven exploration of the data. I also reviewed different filtering techniques and their implications for report clarity and performance.

We used the Power BI training available from Microsoft, found under “Design Power BI reports”.

References

- Gelman, A. and Loken, E. (2020). The garden of forking paths: Statistical analysis and the search for significance. In: Bond, H. and Dubois, D. (eds.) Beyond Multiple Linear Regression. Boca Raton: CRC Press.

- European Union (2002) Regulation (EC) No 178/2002 of the European Parliament and of the Council. Available at: https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX:32002R0178 (Accessed: 13.12.2025).

- Head, M.L., Holman, L., Lanfear, R., Kahn, A.T. and Jennions, M.D. (2015) ‘The extent and consequences of p-hacking in science’, PLOS Biology, 13(3), e1002106. Available at: https://journals.plos.org/plosbiology/article?id=10.1371/journal.pbio.1002106 (Accessed: 13.12.2025).

Contributions & Artefacts

Research Proposal Assessment

Contributions & Artefacts

Contributions

There were no specific week 11 artefacts.

Contributions & Artefacts

Contributions

The artefacts of week 12 consisted of this e-Portfolio and a reflective piece.

Assessments

This module consists of the following three graded assessments:

- A literature review

- A research proposal presentation

- This e-Portfolio page alongside a personal reflection

You can find more details about the teamwork and individual assessments on this sub-page.